Synapse

By Dan Leach

Professor Michael Casey stands at the front of the room ready to give the lecture. There is an energy behind the eyes - the look of a man who wants to let everyone in on a secret.

What he is about to tell this host of students and professors at Goldsmiths, University of London, might, a few hundred years ago, have seen him accused of witchcraft. While most people dismiss psychics, astrology and tarot card readers as hokum, science has quietly gone ahead and achieved something straight from this realm: Michael Casey can read your mind...

Casey is Professor of Music and Computer Science at Dartmouth College (the renowned Ivy League research university based in New Hampshire, U.S.A.), and his research has enabled him to play back the sounds from someone's mind by reading their brainwaves.

Astonishing as that sounds, it is just one of the achievements of the field of music psychology, which also include breakthroughs in treating mental illness and reducing pain.

By investigating such phenomena as the emotional effects of music, or our ability to recall songs not heard for decades, scientists have been able to further our understanding of how we process language, how we learn, and how the structure of the brain can change. In other words, music offers a framework by which researchers can understand how the brain works.

Perhaps this should not be surprising. After all, music is part of the fabric of our society. We chart historical events with it. It allows us to express ideas that we would otherwise have difficulty communicating. Some of us might even see in it the existence of God.

In this feature, we will see that, by investigating our relationship with music, these researchers are touching upon what it means to be human, what divides us from the rest of the animal kingdom. But as technology advances, are they crossing a line that will force us to redefine the very essence of that humanity?

Cheesecake

Is the existence of music proof of the human soul? Like the '21 grams' theory, Dr Duncan MacDougall's infamous idea that the loss of weight when someone dies is evidence of the soul leaving the body, does music show that there is more to us than biology? Or can it be explained purely in scientific terms?

"I think everything can be explained in scientific terms", says Oxford-educated Professor Lauren Stewart, head of the Masters programme at Goldsmiths University in London ‘Music, Mind, Brain’.

"How much of it we can do now, I'm not sure. For instance, one theory about why music can trigger our emotions has been explored in some depth by Leonard Meyer in the 50s and, more recently, David Huron, a musicologist and cognitive psychologist, in his book, 'Sweet Anticipation', which examines the relationship between prediction and emotion.

“Huron was not the first person to say this but probably the first person to come up with testable hypotheses."

Prediction

Huron's theory is essentially this: evolution has programmed humans to get a sense of reward when we predict events accurately because this increases our chances of survival. As a crude example, if I can predict that the deer I am hunting will turn left and not right, then I can kill it and feed my family. Music is pleasurable to us because it satisfies our need to predict successfully. When it surprises us (defies our prediction), it elicits in us “laughter, frisson or awe”, which comes from our natural response of fight, flight or freeze (once we realise it is a non-threatening situation).

An example of this is found in tonality and meter. Whether we like one note over another depends on the tonal context of that particular song (i.e. notes that sound terrible in one song can sound fantastic in another). In the same way, we prefer notes to happen at predictable times (meter) rather than at random. This, again, is contextual. The context sets the expectation and therefore the quality of our attempts at prediction.

Stewart says: "Prediction and anticipation seems to be the middle-man between musical events and our emotional response to them. I think it's not the only thing going on, because you also have memory mechanisms and personal associations to particular songs and you've got lyrics and certain conventional properties of different keys and tempos that play into it as well, but I think anticipation is a nice, cognitive mechanism that explains where emotional response comes from in music."

We enjoy music not just for the direct pleasure, but also because of a range of other reasons: the ideas a song expresses which might challenge, excite, or comfort; the relationship people build with their favourite singer; the sense of belonging to a particular musical movement; the shared human experience of live music; and the connection between a memory and a song.

Huron admits his theory only covers the pleasure we get from expectation and does not include other factors that contribute to our engagement with music. "But," he says, "without a significant dose of pleasure, no one would bother about music". (p.ix, Sweet Anticipation, 2006)

So why do we have music at all? What’s the point?

“That's a controversial and very big question,” says Stewart. “Steven Pinker [the Canadian-born American psychologist and author] annoyed a lot of people because he referred to music as being pure auditory cheesecake, by which he meant there's no survival value associated with music per se.

"The reason that it's so pleasurable, and people assume it must be so important because we have these emotional responses to it, is a bit misleading in his view. He says it hijacks the pleasure circuitry of the brain, which has itself evolved for other purposes, being to seek out biologically useful things. But other people fundamentally disagree with that."

Stewart believes that music may have evolved as a social bonding mechanism. "Early humans used to exist in much smaller groups, in groups that required intensive one-to-one grooming, for instance, to keep the social bonds going. But, at some point in our evolutionary past, early humans existed in quite large social groups."

"It is now widely accepted that singing with others makes you feel close to them and even increases pain tolerance, but what was not known was if this effect scaled-up the more people there were in the group."

To test this, Stewart recently collaborated with Robin Dunbar and his team at Oxford to study a group called "Popchoir", a collection of regional choirs which combine once a year in a singing event totalling about 250 people.

"We found that the effects were just as strong, if not stronger and, quite interestingly, it wasn't just that you feel more close to the people in your regional choir, when singing in the "megachoir", it's that you feel closer to everyone in the megachoir. Even individuals there that you've never met." (published in journal Evolution and Human Behaviour)

Popchoir director and founder Helen Hampton said at the time: "I know of members who have been able to stop taking anti-depressants because of their new-found confidence, raised self-esteem and the new and supportive friendships they have made in the choir."

Singers reported an alleviation of symptoms of illnesses ranging from asthma to cancer to crippling pain. And yet the truth is that science still does not know for sure the exact purpose of music or why it came about. Stewart adds: "There's many other theories of the role of music in evolution to do with mother-infant communication and mother-ese and the sing-song style of communication."

Brain Music

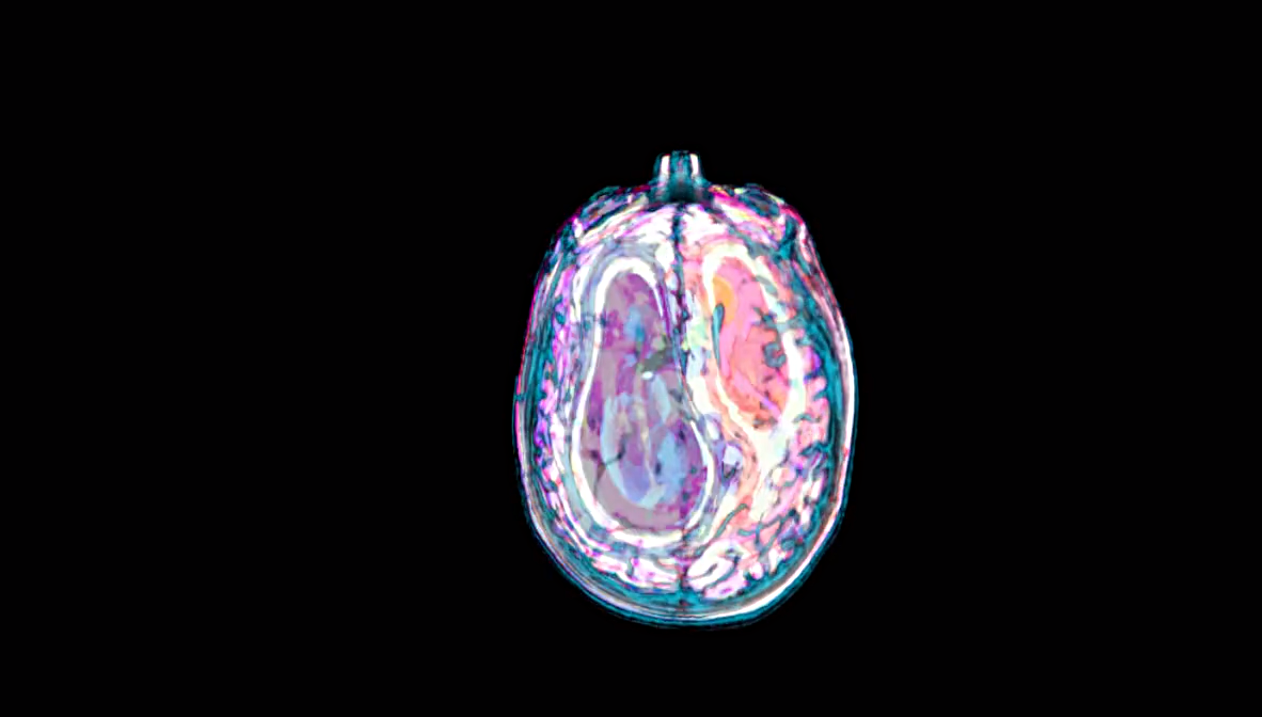

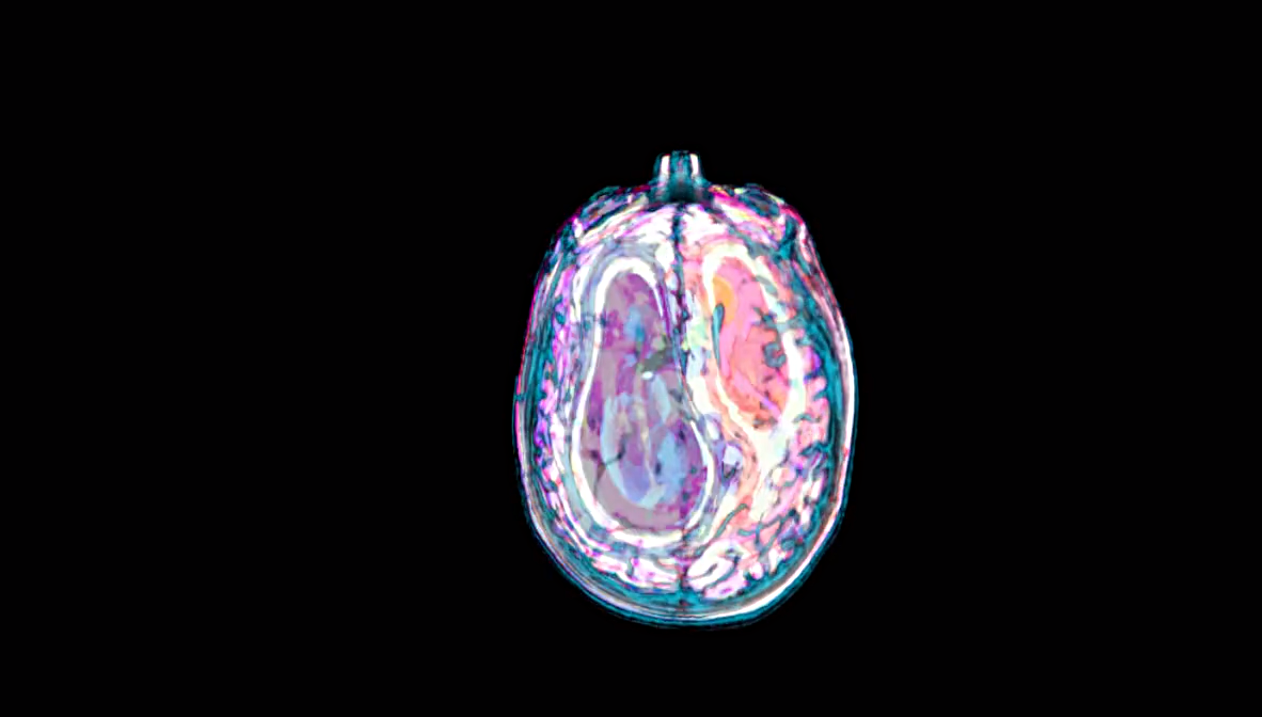

The field of music psychology received a huge shot in the arm in the 1990s with the introduction of brain-scanning technology. Psychologists had been writing seriously about music since the 1950s, but now, with machines such as fMRI (functional magnetic resonance imaging) scanners, which show when different parts of the brain are being used, neuroscience was dragging music psychology along with it.

And yet, Stewart says, the two disciplines (cognitive psychology and neuroscience) remain very separate when it comes to music. Cognitive psychology is more interested in what happens, as opposed to where in the brain it happens. "I go to some music cognition conferences and I go to the music neuroscience ones and they're quite different groups of people to be honest. But, of course, I think the best stuff happens when you're testing a framework or approach from music cognition and then working out how it's implemented in the brain.

"I think you need a good reason to be doing brain imaging research. I'm always interested to know what question it's really addressing. I think we've gone past the time when it was good enough to just show that different brain areas were involved when listening to music because of course they are and everybody knows that really."

Stewart's involvement in music psychology was quite accidental. Following a master's in neuroscience at Oxford, she took a research position at UCL (University College London) working for Uta Frith, a German developmental psychologist made a Dame in 2012 for her work on autism and dyslexia. Stewart wanted to study how literacy changes the brain for her PhD, but finding test subjects proved to be difficult. So she used another form of symbolic notation - music.

She taught participants to play the piano to grade one level, scanning them (whilst they looked at musical notation) before and after the learning. As a result, Stewart was among the first researchers to demonstrate the plasticity of the brain - meaning that the brain will change its structure depending on how it is used - a landmark finding which would resonate throughout the field and beyond. Here was physical evidence that you could make yourself a smarter, better human; that sometimes we have more control over our biology than we realise.

"All this happened", says Stewart, "at a time when, internationally, music and the brain was a new hot topic. Traditional areas in cognitive neuroscience are language, attention, movement, numeracy, but no one had really been looking at music. People thought it was really quite an interesting domain that had been overlooked. It's not quite like language, it's not quite like vision, it's something else. And it started to grow."

In 2000, the Mariani Foundation was set up with the explicit purpose of bringing together scientists to further the study of music psychology. Stewart was invited to New York for the first conference, a meeting of minds which now takes place every three years in various locations.

After completing her PhD, she began working on congenital amusia (commonly known as being tone deaf) with an expert in auditory perception, Prof Tim Griffiths. This, in turn, led to a fellowship at Goldsmiths, University of London, in the area of neuroscience and music, for which she helped develop the master's programme, "Music, Mind and Brain".

One of the current students is Iris Mencke, who is investigating what happens when we listen to more experimental, abstract music.

"I want to find out what sort of emotions are evoked by this? Do people who enjoy this kind of music develop something like a pleasure for the abstract? Also, are we able to learn, or get used to, the dissonant and a-rhythmic style, just as we get used to classical tonal musical languages?"

Her ultimate aim is to get a better understanding of the perception of art and music of the 20th century.

Meeting of Minds

The ability to measure activity in the brain has led to medical advances and, as the technology becomes cheaper and more portable, composers have begun to incorporate it into the art itself. Stanford professors Chris Chafe and Josef Parvizi converted the electrical spikes from the EEG of a seizure patient into sound (see title screen). 'Raster Plot', composed by Eduardo Miranda, professor of computer music at Plymouth University, UK, uses rhythms generated by a computer simulation of a network of neurones (each neurone corresponding to an instrument) to mimic the way the brain encodes information.

Another of Miranda’s compositions, ‘Corpus Callosum’, combined material from Beethoven’s Seventh Symphony with fMRI data recorded from his own brain as he listened to the same symphony, in order to replicate the relationship between the two sides of the human brain.

But it doesn’t stop there. In 2015 he became the first person to create a biocomputer system to make music by growing mould on a circuit board. As music is played, the mould responds by moving, thereby creating electrical activity which Miranda’s system then converts back into sound.

Frontiers

Professor Michael Casey's CV is somewhat intimidating. The 48-year-old, originally from Derby in the UK, has a PhD from MIT and is currently Professor of Music and Computer Science at Dartmouth. He is the author of six books and numerous articles, and his research decoding musical sound from human brain imaging is beginning to blur the lines between computer science, neuroscience and, indeed, science fiction.

In 2012, he used an fMRI scanner to record the brain activity of a set of test subjects as they perceived and imagined sounds. From there, he was able to map out a pattern in such detail that it could be used to decode the brain activity of a different set of people. He could actually recreate the sounds they were imagining and even listen to them (Casey et al., 2012; Hanke et al., 2015).

They were not exactly the same as the originals, but they were close enough to know that a monumental barrier had been broken. It is not being melodramatic to say that nothing will ever be the same again.

So what will be the impact of this work? One application could include composing music with only the mind, especially helpful for the physically disabled. It also affords scientists an objective view of what a person is hearing - instead of having to rely on the subject's description. Looking further forward, Prof. Casey agreed that uploading and downloading whole intellects is feasible.

New Dawn

The ramifications of this could be seismic. If human thoughts can be turned into digital data then, theoretically, it must be possible for a computer to contain human thoughts and therefore emulate a human.

‘The Turing Tests in the Creative Arts’ is designed to examine precisely this question. Hosted by Dartmouth College’s Neukom Institute for Computational Science, the new competition takes its name from Alan Turing’s 1950 test of a machine’s ability to exhibit behaviour indistinguishable from that of a human.

Competitors are invited to create a piece of software that can produce either a poem, a short story, or a DJ set, to see whether current computer technology can create art that is indistinguishable from that of a human.

But this isn’t just about testing the capabilities of computer intelligence. For Casey “it’s a wake-up call to culture: let’s see how well machines can do at doing the very things that we think are sacrosanct.

“The very idea that a computer could write a story that would have meaning to a human I think would be big news if it could happen, and I think we need to question the role of technology in culture. It’s already there, but it’s coming in insidiously.

“A lot of what you’re being recommended by sites such as Netflix is being mediated by algorithms. It already is a kind of artificial intelligence that’s going on behind these things, not to mention the actual production of the media itself.

“The way film scripts are vetted and the way the films are chosen to be produced goes through a process that also involves data analysis, statistical analysis, a kind of artificial intelligence to figure out which storyline should go, which actor should be in it, which particular movie, so that they can guarantee that they’re gonna make some money.”

In this context, the ‘Turing Tests in the Creative Arts’ raises an ethical question. Is it wrong to analyse the creative process too deeply? The beauty of art is that it is openly accessible: anyone, rich or poor, young or old, from any background, is capable of creating and connecting to others through it. If the ability to create art is categorised and set down in a pattern to be reproduced, then we are making it available for ownership and thus turning it into a commodity like everything else.

Prof Casey believes this has already happened but that culture may still reject such attempts to “own” artistic creation. He said: “It’s interesting because I think culture has a very complicated relationship between the producers and the consumers. On the one hand, businesses can target consumers and can produce product that they can predict will be successful and popular.

“On the other hand, subcultures emerge that reject that kind of manipulation and then go entirely in the opposite direction, producing a kind of a new target, if you like, so you know hipster culture and alternative culture have been pretty prevalent in the last 20 years, probably precisely because of the machine of the culture industry. But they themselves become a kind of a target. So what’s next after that?”

Programming Creativity

David Huron believes the better we understand the act of creation, the more successful we are at it: "The more we rely on our intuitions, the more our behaviours may be dictated by unacknowledged social norms or biological predispositions. Intuition is, and has been, indispensable in the arts. But intuition needs to be supplemented by knowledge (or luck) if artists are to break through 'counterintuitive' barriers into new realms of artistic expression."

What he is saying is that progress requires an understanding of the creative mechanisms, but at what cost? To codify our thoughts and the essence of creativity is to bottle the quality that most people believe makes us human. Our ability to create art is one of the ways we differentiate ourselves from the rest of the animal kingdom and, so far, from artificial intelligence.

Music may never prove the existence of a human soul, but it is doing something equally profound. By researching its effects on the brain, scientists may force us to redefine our view of what being human is.

Macho Zapp would sincerely like to thank:

Professor Lauren Stewart

Professor Michael Casey

The Night Sea

Iris Mencke

Professor Eduardo Miranda

Professor Chris Chafe

Professor Josef Parvizi

Maryam Zaringhalam

Lora-Faye Ashuvud